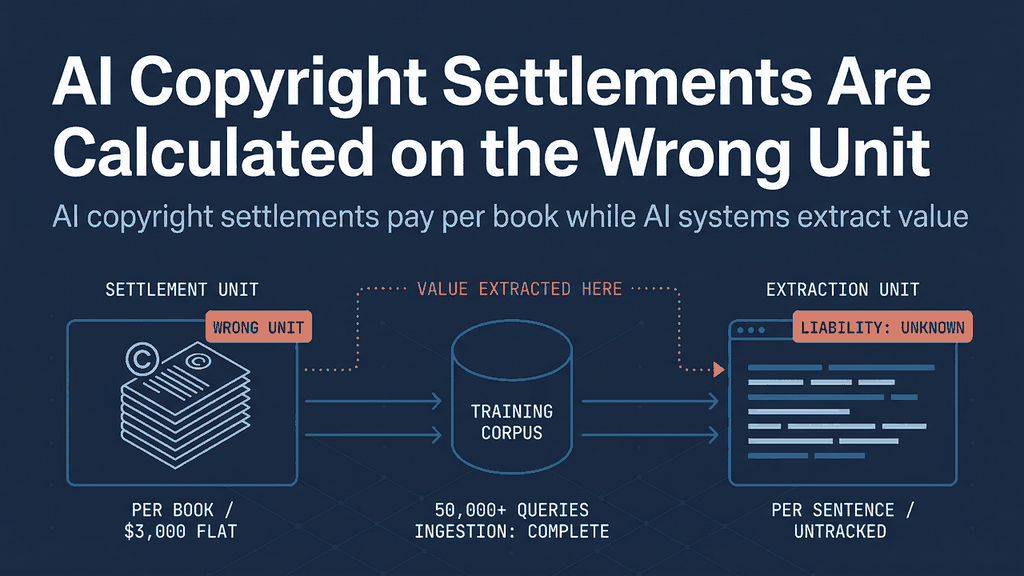

AI Copyright Settlements Are Calculated on the Wrong Unit

AI copyright settlements pay per book while AI systems extract value per sentence. Without sentence-level provenance, every future settlement will underpay rightsholders.

When Anthropic agreed to pay $3,000 per book in its $1.5 billion copyright settlement last September, publishers called it a landmark. It was. The largest copyright settlement in American history demonstrated that building AI training corpora from copyrighted works carries real financial liability. But the number itself reveals a structural problem that will shape every AI copyright negotiation for the next decade: a book whose sentences a training pipeline processed 50,000 times would receive the same $3,000 as a book processed once. The settlement compensates authors per work. AI systems extract value per sentence. Without infrastructure to track usage at the sentence level, the gap between what publishers are owed and what they collect will widen with every model generation.

󠇟󠇠󠇡󠇢󠅀󠇥󠅥︂󠆄󠅋󠅶󠇑󠆶󠅃󠅌󠅗󠇨󠅴󠆪󠆻󠆾️󠆈󠆚󠆠󠅼󠅶󠇌󠆫󠇬󠇅󠇔󠅂󠄸󠄮󠇢󠅞︀󠅜󠆊︇󠅟󠇙󠇥This post discusses legal developments for informational purposes only and does not constitute legal advice. 󠇟󠇠󠇡󠇢︀󠅍󠆑󠅿󠆷󠅿󠄵󠆬󠆽󠄎󠇫︇󠅚󠇔󠄦󠇑󠆻󠇙󠄞󠄇󠇦︊󠇌︊󠇅󠅛󠅓󠅽󠇙󠇄󠇐󠆮󠆊󠆺︋󠅼󠄭︋󠆠󠇗Encypher is a technology company, not a law firm. 󠇟󠇠󠇡󠇢󠇭󠇁󠆭󠅀󠇊󠆐󠅊󠅾󠆯󠆢󠇄󠇟󠆸󠆒󠇕︎󠅤󠅦󠄜󠅌󠅳󠅋󠅴󠅱󠅣󠅵󠅨󠆨󠄧󠄻󠇆󠅬󠄴󠅱󠆈󠅽󠇄󠄝󠄮󠅰Consult qualified legal counsel for advice specific to your situation. 󠇟󠇠󠇡󠇢󠅻󠅽󠄟󠅁︆󠇦󠅃󠄋󠅕󠇧󠄢󠇗󠄝󠄲󠆷󠄢︃️󠆘󠇄󠄅󠇑󠆜󠄐󠆑󠆜󠆙󠇫󠇋󠆨︆󠆠︃󠇢󠄞󠆅󠅽︄󠅪󠄖

The Unit Problem

The settlement in Bartz v. Anthropic covers an estimated 500,000 books drawn from shadow libraries including Library Genesis and the Pirate Library Mirror. The Authors Guild confirmed that rightsholders can expect at least $3,000 per title, less costs and fees, with the amount shared among rightsholders for a given title. The claim deadline is March 30, 2026. Final approval is set for April 23, 2026.

󠇟󠇠󠇡󠇢󠇂󠄤󠅘󠅁󠄆󠆿󠄡󠅁󠅣󠄏󠅅󠅐󠇪󠇚󠇏󠆱︈󠆭󠅺󠇑󠄝󠅺󠅽󠄡󠆛󠇄󠇐󠆋󠆨󠇠󠅝󠄫󠇠󠆆󠅈󠅦󠆧󠇆󠄔󠇓The settlement math works the way copyright settlements have always worked: per work, per ISBN, per ASIN. 󠇟󠇠󠇡󠇢󠆡󠅌󠅵󠄎󠇖󠄖󠄆󠆠󠄦󠅭󠄗󠆭󠄩󠅇󠅔󠄩󠇁󠆾󠇜󠇮󠅨︉󠆳󠆌󠆃󠄕󠆱󠅋󠆢󠇮󠆕󠇢︅󠄀󠄟󠅖󠆳󠅺󠄫󠆸Copyright law protects the work as a unit. 󠇟󠇠󠇡󠇢󠆲︆󠇔󠆿󠆡󠄓󠅇︁󠆼󠄛󠅖︉󠆎󠅧󠄉󠇧󠅵󠅀︉︋󠅐󠆏󠅅󠄿󠅿󠇃󠇐󠅶󠄟󠄪󠆐󠇭󠅒󠆵󠅒󠇍󠄑󠆊󠆉󠇎Damages are calculated per infringed work. 󠇟󠇠󠇡󠇢󠇆󠄅󠇟󠇍󠄍󠇫󠄚󠄸󠇇󠇏󠆣󠄍︍󠄓󠄨󠇌󠅒󠇖󠆾︆󠅈󠇐󠆿󠅦󠅕󠆶󠅗󠅁󠄅󠄆󠆓󠅹󠆶︍󠆄󠅡󠅅󠆺󠇟󠆦This made sense when the infringing act was reproduction - photocopying a book, pirating a film, uploading a song. 󠇟󠇠󠇡󠇢󠆚󠆴󠆈󠇒󠅳󠄘󠆧󠄦󠅏󠆯󠇬󠇡󠅿󠅺󠆴󠄽󠄰󠇖󠆸󠅼󠆫󠅑󠆮󠅊󠇧󠆾󠅼󠅨󠅛󠅂󠅷󠆄󠄤󠄧󠄓󠅄󠆷󠇥󠇓󠆱The copy was the unit of harm because the copy was the unit of value.

AI training does not work this way. A large language model does not read a book the way a human does - sequentially, once, forming an impression. Training splits text into short token sequences and feeds them through gradient descent in batches, sometimes repeatedly across epochs. Each pass adjusts model weights by a small increment. A single sentence from a medical textbook that precisely describes a drug interaction may contribute more to a model's capability on that topic than an entire chapter of general background. The economic unit of value extraction is the sentence or the semantic pattern, not the book.

󠇟󠇠󠇡󠇢󠇙󠄻󠇋󠇑󠄏󠆑󠄦󠆥󠄘󠅘󠅬󠄒󠄀󠄴󠄢󠆀󠄢󠅳󠄴󠄩󠄲󠅎︃󠆡󠄒󠇄󠇦︃󠅧󠄀󠅤󠆂󠆉󠅷󠇚󠆇󠆿︉󠅊󠅂The settlement's per-work structure means there is no mechanism to account for this differential. 󠇟󠇠󠇡󠇢󠆋󠇋󠇁󠅿󠄉󠆄󠇥︀󠅓󠄂󠅤󠄌󠄱󠅿󠄐󠇘󠆙󠅀󠆻󠅒󠆝󠅓󠄑󠄮󠆐󠇓󠇈󠅚︀󠄛︎󠄗󠄎󠇙󠅅󠄐︁󠅤󠆮󠄲An author whose prose style was heavily extracted - whose sentences appeared in training data repeatedly and shaped the model's outputs on specific topics - receives the same flat fee as an author whose book was ingested once and contributed negligibly to any capability. 󠇟󠇠󠇡󠇢󠆏󠆵󠅖󠄎󠄓󠅶󠄐󠆦󠄜︄󠄻󠆃󠆿󠆕󠅇󠇖󠅊󠄠󠅖󠆁󠇛󠅿󠆅󠄷󠅣󠅴󠇋︁󠅎󠇬󠅶󠆺󠆯󠅈󠆝󠆥󠆚󠇔󠅤󠄇The $1.5 billion total is large. 󠇟󠇠󠇡󠇢󠄠󠇏󠆬󠄛󠇎󠄳󠅫󠄀󠅾󠆦󠅏󠆮󠄦󠆲󠄝󠅸󠅫︁󠆨󠆎󠇆︂󠆚󠅔󠆈󠆯󠄇󠅼󠅞󠇚󠇥󠄢󠆇󠅽󠄲󠅎󠅖󠆀︎󠄱The per-unit allocation is blind.

This is not unique to the Anthropic settlement. The licensing deals struck before and after it - OpenAI with News Corp, OpenAI with the Associated Press, UMG with Udio - are also structured per outlet, per publication, negotiated in bulk. None of them price usage at the sentence level. None of them account for which specific sentences contributed to which specific model capabilities.

󠇟󠇠󠇡󠇢󠅿󠇨󠆂󠆑︆󠅉󠆅󠅵󠆰󠄚󠇕󠆝󠇖󠅻󠅩󠇦󠄴󠇞󠄻󠆢󠄟󠇁󠆛󠅯󠆞󠆂󠄓󠄈󠅒󠅉󠇜󠇁󠄘󠄗󠇢󠇘󠆫󠇒󠄆󠅙Why Retroactive Attribution Fails

The obvious response is: measure it after the fact. 󠇟󠇠󠇡󠇢󠇘󠆔󠆽󠄻󠄘󠅏󠆟󠆶󠅳󠆳󠅥󠆬︎󠄸󠆌󠆮󠆢󠄹󠆑󠇧󠆌󠇟󠆋󠅷󠅮󠅥󠅟󠆑󠄆󠄺󠇔󠆸󠆏󠆭󠄶󠄡󠇪󠄨󠆖󠅯Go into the training data, find which sentences from which books were used how many times, and allocate accordingly.

󠇟󠇠󠇡󠇢󠄣󠆒󠄧󠆦󠄀󠆫󠆄󠅫󠆎󠄭󠄦󠇭󠆓󠄄󠄭󠆓󠄀󠄙󠆑󠄒󠇉󠅏️󠅸󠅛️󠅮󠆟︌󠇧󠆈󠆒󠄮󠅫󠅯󠇞󠆥󠇪󠅏󠄎This does not work. 󠇟󠇠󠇡󠇢󠆱󠅍󠄈󠇐︉󠄳󠇁󠄜󠇈󠇧󠄐󠆊󠄪󠆾󠆩󠆌󠅡󠆽󠄢󠄖󠄸󠅡󠅒󠅹󠆠󠅏󠆮󠄇︁󠆨󠄭󠄧󠄖󠆴󠇤󠅀󠇉󠅂󠄃󠆶According to Sterne Kessler's analysis of AI discovery disputes, plaintiffs reviewing one of OpenAI's training datasets were forced to abandon searching for a single copyrighted work because the search alone would have taken more than six hours. 󠇟󠇠󠇡󠇢󠅁󠆳󠅂󠆾󠅆󠄲󠄓󠄇󠄅󠇅󠅯󠅾󠅨󠆿󠄮︈󠅅󠇈󠅨󠇆󠇘󠆪󠅃󠄩󠆕󠅍󠄽󠆔󠆕󠆼󠆝󠄡󠄷󠇃󠄯󠅣󠄜󠇡󠅸󠇜One work, one dataset, six hours. 󠇟󠇠󠇡󠇢󠄤󠇖󠄐󠅧󠄗󠄓︂󠅀󠄕󠄧󠇗󠇖󠄤󠄄󠇏󠄸󠅂󠄧󠅈󠅯󠇏󠇝󠆘󠆽󠄧󠅘󠆑󠆛󠄤󠇥󠇙󠆦󠄨󠄻󠄨󠄥󠅢󠆟󠆑󠆛The training corpora for frontier models contain billions of documents across hundreds of datasets. 󠇟󠇠󠇡󠇢󠆉󠄼︋󠇧󠇖󠅞󠅋󠅵󠅕󠅮󠇔󠅚󠄇󠇚󠇝󠄞󠇌︀󠆦󠇜󠅲󠆯󠄃󠅟︈︃󠄬󠇗󠆉󠇇󠅣󠇞󠅖︀󠅸󠇧󠅋󠄿󠆡󠆜Locating every sentence from every copyrighted work in that corpus is not a staffing problem. 󠇟󠇠󠇡󠇢󠅄󠆰󠅣󠆳󠆫󠆦󠆻󠄪󠆿󠅩󠄻󠅬󠇟󠆦󠆃󠇌󠇩󠅥󠅦󠅽󠇆󠅿󠅈󠆿󠆈󠅂󠅸󠅄󠇫󠄒󠄶󠄣󠄉󠄒󠆧︉󠅆󠄻󠆾󠄊It is a computational problem that scales faster than any litigation budget.

󠇟󠇠󠇡󠇢󠅌󠅴󠄷󠅒󠅙󠇎󠆴󠇁󠆨󠆬󠅴󠆖󠄲󠅑󠅿󠅺󠅺︊󠄮︅󠆘󠄨󠇩󠅞󠇀󠄔󠄚󠅛󠅺󠄿󠅸󠆦︇󠄃󠄒󠅫️󠇂󠄊󠄗Even if the search were feasible, finding a sentence in a training corpus does not tell you what it contributed to the model. 󠇟󠇠󠇡󠇢󠅻󠅙󠆿󠄭󠆶󠄶󠅧󠅐︃󠇒󠆲󠄭󠅑󠆶󠅡󠄌󠄟󠆀󠇝󠅵︄󠅿󠇄󠅁󠄬󠅺󠇛󠄳︉󠄇󠇇󠆄󠆑󠆟󠄍󠆆󠆱󠅛󠄪󠇌Training is a statistical process. 󠇟󠇠󠇡󠇢󠆜󠆕󠆜󠅍󠅪󠄧󠅕󠆡󠄁󠄎󠄾󠆎󠆾󠄀󠆑󠅍󠆚󠇐︅󠆎︅󠇁󠇑󠇦󠇢󠄚󠅶󠆔󠇫󠅌󠆫󠇬󠄞󠆰󠅓󠅳󠄏󠆃󠅞󠅨A sentence's influence on model weights depends on the learning rate at the time it was processed, the other sentences in the same batch, the number of epochs it appeared in, and the model's state at each pass. 󠇟󠇠󠇡󠇢󠆋󠆞󠄸󠄊󠆔󠅬󠆡󠄍󠅛󠄫󠅅󠄲󠅠󠅖󠆓󠇃󠆻󠇃󠅷󠅯󠅕󠆰󠇐󠇪󠆢︎󠆓󠆼︍󠄡󠄎󠅥󠇪󠄉󠅆󠅮󠄇󠄥󠅟󠅟Tracing a specific output back to a specific training sentence is an open research problem in machine learning interpretability - not something a forensic expert can testify to in court today.
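A toy sketch can make that path dependence concrete. It runs one step of mini-batch gradient descent on a one-parameter linear model and measures how far a single example moves the weight. The model, the numbers, and the function name `sgd_step` are assumptions invented for illustration; a real language model has billions of parameters, but the update has the same dependence on learning rate and batch composition.

```python
def sgd_step(w, batch, lr):
    """One mini-batch gradient-descent step for the model y = w * x,
    using squared-error loss averaged over the batch."""
    grad = sum(2 * (w * x - y) * x for x, y in batch) / len(batch)
    return w - lr * grad


w0 = 0.0
example = (1.0, 3.0)  # stand-in for one training sentence: input 1, target 3

# Same example, two different learning rates.
move_high_lr = sgd_step(w0, [example], lr=0.1) - w0
move_low_lr = sgd_step(w0, [example], lr=0.01) - w0

# Same example and learning rate, but batched with an example that pulls
# the weight in the opposite direction.
move_mixed_batch = sgd_step(w0, [example, (1.0, -3.0)], lr=0.1) - w0

print(move_high_lr, move_low_lr, move_mixed_batch)
```

The identical example shifts the weight by about 0.6, about 0.06, or not at all, depending only on when and alongside what it was processed. Finding the example in the corpus reveals none of that.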

󠇟󠇠󠇡󠇢󠇖󠆉󠄯󠄌󠅞󠅲󠅩󠅳󠅂󠇝󠅽󠆽󠆂󠆏󠄉󠆉󠇟︌󠆄󠅗󠅡󠄃󠅄󠄭󠇪󠇡󠇏󠄕󠄊󠆗󠅥󠆛󠆻󠅥󠆨󠄎󠆲󠄱󠄯󠄅The flat fee follows from the absence of granular attribution infrastructure; there is no other unit available to measure. 󠇟󠇠󠇡󠇢󠇨󠄁󠇧󠆥󠆐󠄑󠆿󠄯󠆭󠇝󠄆󠅖󠅆󠄮󠄹󠄗󠄈󠄥󠇩󠄃󠄝󠆱󠅶󠇀󠄼󠇅󠆅󠅟󠅙󠅇󠆧󠇟󠄎󠆹󠇌󠆲󠅊󠅜󠅮︅Publishers know which books were in the corpus. 󠇟󠇠󠇡󠇢󠆾󠆿󠇠󠇍󠇒󠅇󠇘󠇍󠇊︄󠄭󠆌󠄳󠆧󠆑󠄍󠄶󠇒󠆹󠄝︆󠅹󠆐󠆺󠆜󠄤󠆎󠄓󠆙󠄚󠅿󠆿󠅔󠆐󠆆󠅜󠅜󠄱󠆗󠅜They do not know - and currently cannot know - which sentences from those books drove which capabilities.

󠇟󠇠󠇡󠇢󠅧󠄳󠆄󠅰︊󠇞󠇑󠅂󠆪󠇪󠇨󠅂󠄦󠇞󠄂󠆰󠆫󠄪󠆌󠇯︀󠆌󠄥󠅪󠄽󠆼󠅅󠅿󠇐󠄇󠄯󠇉󠄢󠄞󠆝󠇡󠇙󠅀󠄲󠅇󠇟󠇠󠇡󠇢󠄌󠄘󠇦󠆨󠇒󠅡󠇥󠆑󠅓󠆖󠆬󠅛󠄩︆󠄅󠆠󠇆󠆆󠆁󠄠󠄀󠇘󠄩󠅸󠅡︎︌󠅢󠅐󠆣󠅿󠆪󠄕󠄺󠆨󠇒󠄢󠆬󠅦󠆧The Counterargument: Settlements Are Working

The strongest case against this thesis is simple. 󠇟󠇠󠇡󠇢󠅘󠆎󠅤󠅴󠄇󠄕󠆩󠄃󠄊󠄙󠇪󠇝󠇈󠄰󠅆󠆖󠅃󠅱󠅘󠆵󠆵󠆞󠄬󠅝󠆳󠅲󠆨󠄈󠆳󠆬︄󠆤󠇧󠅤󠆾󠅼󠆛󠄆󠄬󠇩Publishers have won. 󠇟󠇠󠇡󠇢󠄟󠆆󠅲󠆌󠆉󠅖󠅏󠆂󠆉󠆑󠄕󠆨󠄾󠅊󠆤󠆥󠇃󠇌󠆦󠇆󠇄󠇮󠄚󠄎︄󠇩󠇝󠅾󠆢󠇝󠇣󠅕󠆒󠅼󠅣󠇭󠆎󠆅󠇋󠅣The Anthropic settlement is $1.5 billion. 󠇟󠇠󠇡󠇢󠄏󠅢󠄋󠇍󠇀󠆰󠇪󠆐󠇡󠅮󠄲󠅜󠆆󠄺󠄸󠆲󠅗󠇖󠆢󠅰󠅟︈󠆁󠄀󠄫󠆕󠆹󠄵󠇇󠆒󠄝︋󠄟󠇤󠆔󠇘󠇄󠄼︃︎AI companies have shown willingness to pay substantial sums to resolve copyright claims over training data. 󠇟󠇠󠇡󠇢󠄲󠄎󠅈󠅒󠅴󠅅󠄧󠆾󠆣󠆹󠅂󠇘󠄶󠅧󠆧󠆍󠆒󠇮󠇈󠅟󠆂󠅜󠅕󠅘󠆎󠅙󠇐󠇬󠄴󠅒︂󠅛󠅝󠅙󠇥󠅡󠆇󠄚󠅞󠅪Licensing deals are forming across the industry. 󠇟󠇠󠇡󠇢󠇖󠆆󠄔󠆬󠆠︊󠇑󠅄󠆪󠄍󠆓󠅤󠆔︈󠇘󠆕󠇟󠅐󠄐󠅩󠅫󠅯󠆜󠇪󠅕󠆬󠅒︉󠇚︎󠇬󠆵󠆨󠆮󠄡󠆫󠆋󠅣󠅇󠆞The system is working - imperfectly, as all legal systems do, but the direction is correct. 󠇟󠇠󠇡󠇢󠅳󠆌󠇠󠄕︀󠆴󠇞󠅩󠇩󠅏󠅒󠆅󠇕󠆋󠆹󠇊󠄒󠄼󠄾󠇚󠄣󠅕︃󠄧󠇔󠅝󠅷󠅳󠆟︎󠅇󠇯󠄲︂󠄸󠄸󠄚︆󠆆󠅣Incremental improvements to settlement math are secondary to the primary achievement of showing that AI companies will pay for content.

󠇟󠇠󠇡󠇢󠇓󠆰󠅶︆󠇖󠆥󠇚󠇨󠄋󠄖︎󠄤󠅿󠇞󠄪󠅣󠇚󠅁󠅆︁󠇒󠆤󠅀󠅱󠆀󠄲︅︄󠄂󠇣󠄄󠄤󠄪󠄱󠄲󠆀󠅓󠅎󠄏󠄯This argument is right about the past and wrong about the future. 󠇟󠇠󠇡󠇢󠄵󠆢󠄪󠆀󠅐󠅓󠆒󠄇󠄰󠆼󠆂󠅩󠇗󠆖󠄺󠅨󠆲󠄆󠄌󠅀󠇝󠆊󠄇︍󠅩󠅓󠇮󠇈󠄼󠅼󠇥󠄚︂󠅌󠅅󠅊󠇪󠅼󠆌󠆂Demonstrating that AI companies will pay substantial sums to resolve copyright claims was the necessary first step, and publishers achieved it. 󠇟󠇠󠇡󠇢󠅹󠅑󠅴󠅔󠅫󠇭󠆲󠆖󠇝󠄏󠅦󠇉󠆰󠄐󠆅󠆂󠄳󠆼󠇯󠇌󠄌󠇇󠆟󠅱󠄴󠇥󠅲󠄑󠄠󠅋󠆌󠅑󠆎󠇂󠄌󠄂󠆄󠄽󠅩󠅯But the compensation structure that emerged from this first wave of settlements is now the baseline for every negotiation that follows. 󠇟󠇠󠇡󠇢󠅍󠄚󠅞󠅹󠅤︆󠅩󠄭󠅽󠅑󠆗󠅉󠆎󠅶󠆱󠅗󠇃󠄽󠇤󠇨󠇈󠄕󠇧󠆮󠆊󠅸󠅈󠅧󠄊󠄕󠅲󠅎󠆏󠇦󠅴︇󠆭󠅬󠄬󠇕AI companies have a strong incentive to maintain per-work pricing: it caps their exposure regardless of how intensively they use any given work. 󠇟󠇠󠇡󠇢󠅷󠅌󠆭󠄤󠇯󠄝󠅳󠅈󠆷󠆿󠄋󠆪󠆦󠅾󠅦󠆥󠆅󠆥︋󠄉󠄊󠆗󠇧󠅝󠆼󠇓󠅫︆󠄯󠅦󠅒󠄯󠆋󠄦󠄯󠆅󠅤󠆍󠇊󠅞Publishers who accept per-work deals are locking in a rate that bears no relationship to the actual value their content provides to AI systems.

󠇟󠇠󠇡󠇢󠅪󠄉󠅒󠄙󠅺󠄻󠅺󠄁󠄼󠇬󠅯󠅀󠆃󠆑󠆫󠆼󠅈󠄗󠆋󠆌󠇫󠆕󠇏󠆪󠆇󠆩󠄤󠆾󠄗󠄃󠇪󠄕󠆾󠅩󠅠󠇯󠇛󠄖󠄰󠅑The parallel to early music streaming is instructive. 󠇟󠇠󠇡󠇢󠇖󠆵󠄒󠄔󠅹󠅉󠅭󠇆󠅧󠆹󠆔󠄣󠄋󠇞󠇜󠆷󠄺󠇟󠄿󠄊󠇯︍󠄏󠆖󠅛︇󠅌󠇎󠆐󠄛󠅷󠄕󠆘󠄱󠇩󠄩󠅡󠆅󠆇󠅿When Spotify launched, record labels negotiated flat licensing fees per catalog. 󠇟󠇠󠇡󠇢󠄦󠇏󠄗󠇌󠆸󠄀󠆇󠆸󠇫󠄏󠇟󠄉󠇌󠆒󠅨󠄂󠆠︀󠇌󠅆󠅘󠅙󠄟󠄹󠄉󠆡󠇐󠅊󠅒󠄍󠇛️󠄮󠅀󠆹󠅛󠄚󠅱󠄈󠄨The streaming service paid the same whether an album was played a million times or never. 󠇟󠇠󠇡󠇢󠄐󠆪󠇬󠅹󠆘󠆒󠄙󠆎󠆭󠇬︄󠆸󠅦󠇗󠄭󠅞󠆔󠇣󠅊󠇪󠅧󠅥󠅈󠄞󠄡󠄟󠆳󠅽󠆒󠅋󠆷︇󠅮󠄌󠆕󠅛󠆈󠄜󠄻󠆔It took years of infrastructure development - per-stream tracking, reporting systems, royalty allocation algorithms - before the industry moved to per-stream royalties that reflected actual usage. 󠇟󠇠󠇡󠇢󠄑󠆛󠄼󠅾󠅛󠄦󠄨󠅤󠆋󠄴󠆟󠄎󠆀󠅉󠄒󠅯󠄜󠆭󠇑󠆬󠄺󠆜󠄔󠆸󠆌󠅣︈󠆉󠇛󠄾󠇭󠆓󠇤󠆌󠅦󠄟󠅥󠆟󠅑󠅚The labels that built tracking infrastructure earliest were the ones that captured value when the pricing model shifted.

󠇟󠇠󠇡󠇢󠇟󠅋󠆩󠄃󠆁︈󠆱󠆠󠅥󠆮󠄊︄︀󠅏󠄠󠅬󠄤󠆌󠇇󠄩󠆲󠄔︊󠆂󠇕󠅀󠆗󠇚󠆷󠇥󠅧󠅵󠅇󠄫󠆽󠆺󠆹󠇟󠄳󠄮AI licensing is at the flat-fee stage now. 󠇟󠇠󠇡󠇢󠄽󠇔󠄅󠅊󠅶󠇙󠇁󠇤󠆉󠆍󠅟󠆬󠆌︃󠆙󠅲󠅞󠄲󠅱󠄔󠆮󠅇󠄸󠇗󠄄󠆸󠇞󠇦󠄥󠄔󠆍󠅭󠆾󠆌󠅖󠇥︇󠅨󠄫󠆠The question is whether publishers will build the tracking infrastructure before or after the pricing model changes.

The Technical Answer: Sentence-Level Provenance

The infrastructure gap is specific. 󠇟󠇠󠇡󠇢󠄼󠄲󠆥󠄊󠅤󠄽󠆡󠅇󠄳󠇕󠅱󠅜󠆞󠇣󠄌󠆛󠅺󠆟󠆵󠄐󠇉󠄸󠄉󠆖󠇘󠄐󠄈󠅸️󠇀󠅶󠅈󠆘󠆶󠆽󠇖󠆽󠇩󠇇󠆾At the moment content enters an AI pipeline - whether for training, retrieval, or fine-tuning - the system needs to know, at the most granular level possible, who created each piece of content and under what terms it can be used. 󠇟󠇠󠇡󠇢󠆎󠆱󠅴󠇘︎︎󠄭󠅷󠆗︇󠄂󠄱󠅿󠄡󠄶󠆐󠇖󠆧󠄽󠄕󠆢︋󠅶︍󠅍󠄾︊󠆁󠆞󠇨︃󠇍󠄷󠄢󠄋󠆫󠅤󠇠󠄢󠄺That information must travel with the content itself, because content passes through crawlers, APIs, data pipelines, and preprocessing systems before it reaches the training or retrieval layer. 󠇟󠇠󠇡󠇢󠄕󠇗󠇑󠅶󠅯󠅞︌󠄅󠄋󠇤󠄌󠅨󠄏󠄿󠄁󠆹󠅈󠅔󠄕󠆪󠄈󠄽󠅋󠅩󠄆󠅓󠅛󠅞󠄎󠄓󠇐󠄠󠄭󠄱󠆢︃󠇮󠆱󠇍󠆸Metadata stored in a separate system - a CMS, a licensing database, a robots.txt file - is not present at the point where the AI system makes its ingestion decision.

󠇟󠇠󠇡󠇢󠅮󠅟󠇢︉󠄪󠅪󠅆󠆛󠇞󠅡󠇀󠆔󠇎󠅃󠇏󠅧󠅅︆󠆣󠇇󠇀󠄫󠆮󠆟󠄰󠄯󠄓󠄜󠅡󠄿󠆈󠇫󠇒󠇉︍󠆫󠅉󠄦󠄼󠇇C2PA Specification 2.3, published January 8, 2026, addresses this for text. 󠇟󠇠󠇡󠇢󠄨︎󠆘󠅚󠇞󠆌󠇜󠅝󠅂󠄰󠄴󠇡󠆈󠅋󠄱󠅔󠅵󠆘󠅰󠆰󠅅󠇓󠇞󠆍󠅡󠅄󠇌󠄙󠆦󠇚󠇑󠄸󠇊󠄐󠄊󠆋󠆤󠅮󠅣︉Section A.7, "Embedding Manifests into Unstructured Text," defines how cryptographically signed provenance manifests are embedded directly into text content using Unicode Variation Selectors - non-rendering characters that travel with the text through copy, paste, excerpt, and redistribution. 󠇟󠇠󠇡󠇢󠇣󠇬󠆇󠅺󠆅󠅿󠆒︎󠅠󠄏󠇒󠅜󠄃󠅚󠄥󠅄󠅌󠇋󠅬󠅰󠅠󠇀󠄖󠇞󠄴󠄰󠆀︌󠇡󠆇︅󠆝󠆥󠅑󠇚󠆐󠅒󠆱󠇞󠆻We co-authored Section A.7 as co-chairs of the C2PA Text Provenance Task Force, working with review from Google, OpenAI, Adobe, Microsoft, the New York Times, BBC, and AP through the C2PA consortium.

󠇟󠇠󠇡󠇢󠇛󠄥󠄨󠇆󠄳󠆱󠆶󠅏󠇭󠇓󠇚󠆽󠇜󠇫󠆽󠆛󠇀󠄽󠇅󠅲󠅂󠄂󠄈󠅇󠆆󠆸󠅋󠅼󠅿󠆮󠄯󠇥󠄮󠇀󠆝󠅗︄󠆂󠅛︍C2PA Section A.7 defines embedding at the document level - a single signed manifest covering the document as a whole. 󠇟󠇠󠇡󠇢󠅥󠅶󠄣󠄎󠇋︇󠅋󠇍󠆱󠅜󠄠󠄪󠆣󠆆󠄀󠆠󠆪󠅣󠇜󠆐󠅮󠅰󠄵󠆽󠄱󠅈󠇋󠆵󠄑󠆱󠄍󠄍󠇂󠄾︈󠇓󠄫󠆋󠆦󠇃Encypher's technology, built on this standard, extends provenance to the sentence level: each sentence carries its own markers binding authorship, licensing terms, and permitted uses to that specific text. 󠇟󠇠󠇡󠇢󠅽󠅋󠅛󠇨︉󠅗󠆞󠄖󠇯󠅙󠅘󠇉󠆞󠄺󠆅󠆷󠆍󠇀︄󠆛󠅍󠄗󠆔󠄨󠄂󠆷󠅐󠆱󠄀󠆔󠆮󠅿󠅕󠆁󠇘󠄑󠆇󠇪󠆘󠄯When any of those sentences enters an AI training pipeline, the markers travel with it. 󠇟󠇠󠇡󠇢󠄱︆︀󠅙󠅈󠅠󠄽󠄄󠄛󠇘󠇚󠅂󠅛󠄯󠄆󠇗󠆄󠄷󠇎󠆁󠆽󠆬󠅹󠅣󠄇󠆖󠄧󠆲󠅁󠆞󠅅󠆚󠅡󠆳󠄫󠆏󠄝󠇐󠅄󠅍The AI company's ingestion system can read the credential, check the licensing terms, log the usage, and - critically - produce an auditable record of what was used and under what authorization.
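To show the general idea of metadata riding inside text, here is a minimal sketch that hides a byte payload in Unicode variation selectors appended to a sentence. It is not the C2PA Section A.7 wire format and it is not Encypher's implementation: the byte-to-selector mapping, the function names, and the JSON payload with its `no-ai-training` label are all hypothetical, and the payload is unsigned, whereas a real manifest is cryptographically signed.

```python
def byte_to_selector(b):
    # Illustrative mapping (an assumption, not taken from the C2PA spec):
    # bytes 0-15 use U+FE00..U+FE0F, bytes 16-255 use U+E0100..U+E01EF.
    return chr(0xFE00 + b) if b < 16 else chr(0xE0100 + b - 16)


def selector_to_byte(ch):
    cp = ord(ch)
    if 0xFE00 <= cp <= 0xFE0F:
        return cp - 0xFE00
    if 0xE0100 <= cp <= 0xE01EF:
        return cp - 0xE0100 + 16
    return None  # an ordinary character, not part of the payload


def embed(sentence, payload):
    """Append the payload to the sentence as non-rendering selectors."""
    return sentence + "".join(byte_to_selector(b) for b in payload)


def extract(text):
    """Recover every payload byte hidden in the text."""
    decoded = (selector_to_byte(ch) for ch in text)
    return bytes(b for b in decoded if b is not None)


def strip_selectors(text):
    """Return only the characters that are not variation selectors."""
    return "".join(ch for ch in text if selector_to_byte(ch) is None)


original = "Damages are calculated per infringed work."
payload = b'{"author": "A. Writer", "license": "no-ai-training"}'  # hypothetical terms
marked = embed(original, payload)

assert extract(marked) == payload
assert strip_selectors(marked) == original
```

Because the payload is part of the string itself, it survives copy and paste wherever the variation selectors are preserved. This toy version would also misread variation selectors already present in the text, such as those used in emoji sequences, which a production scheme has to handle.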

󠇟󠇠󠇡󠇢󠄫󠄆󠅰󠅢󠇢󠆠󠆏󠇢󠅕󠆒󠆤󠅋󠇡󠇎󠄴󠄻󠆙󠄪󠇡󠄽󠅻󠄮󠅔︄󠇪󠄆󠅵󠇓󠄪󠆙︀󠄉󠄬󠆃︌󠆐󠆡󠅠󠇚󠄚This changes the economics of attribution. 󠇟󠇠󠇡󠇢󠅷󠆛󠇚󠄗󠅫󠅽󠇛󠇆󠆼󠄀︅󠆂󠄓󠆺︁󠇮󠅍󠄇󠄱󠆬󠄱󠇔󠄍󠄮󠆩󠄊󠅝󠆑󠄀󠆆󠅵󠅆󠇐󠄞󠄖󠆾󠄘󠆀󠇖︄With sentence-level provenance, a settlement or licensing agreement can be structured around actual usage: how many sentences from a given author's work appeared in training, how many times, in how many batches. 󠇟󠇠󠇡󠇢󠄷󠄛󠆺󠆲󠅽󠇝󠅺󠇀󠄸󠄶󠄗󠄆󠅆󠅇󠄱󠇟󠆡󠄴󠆧󠄰󠆅󠅬󠅺󠅜󠆗󠅼󠆦󠅿󠄨󠅙󠇖󠅪󠅾󠅸󠇐󠄘󠄷󠆛󠆮󠅰The flat per-work fee becomes a floor, not a ceiling. 󠇟󠇠󠇡󠇢󠆣󠆩󠆽󠄹󠅝󠅋󠇩󠄽󠅭󠅐︌󠇥󠅉󠇋󠆕󠄇󠄦󠆿󠄏󠆖󠄋󠄐󠆊󠅂󠆔︄󠅊󠅏󠇯󠅳󠆯󠇙󠆑󠄫󠅆󠆩︁󠆬󠅔󠄸Publishers with provenance infrastructure have a negotiating position grounded in data. 󠇟󠇠󠇡󠇢󠄚󠄷󠄑󠆦󠅪󠆎󠆚󠆳󠇈󠆞󠄽󠆱󠄑󠇏󠆒︈󠅅󠄗󠄹󠄥󠆲󠆡󠅎󠆈󠄻󠅽󠄩󠄁󠆄︁󠆝󠇇󠅰󠆴󠇇󠅲󠄷󠆋󠅫󠇅Publishers without it have a number that an AI company offered.
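As a hedged illustration of "floor, not ceiling", the sketch below splits a settlement pool so that every title receives a flat floor and the remainder is divided in proportion to logged sentence uses. The formula, the function name `allocate`, and the figures are assumptions chosen for the example; no existing settlement or license is known to use this structure.

```python
def allocate(pool, floor, uses):
    """Give every title `floor`, then split what is left of `pool`
    in proportion to each title's logged sentence uses.

    `uses` maps a title identifier to its count of logged uses.
    """
    base = floor * len(uses)
    if base > pool:
        raise ValueError("pool cannot cover the per-work floor")
    remainder = pool - base
    total_uses = sum(uses.values())
    shares = {}
    for title, count in uses.items():
        if total_uses:
            bonus = remainder * count / total_uses
        else:
            bonus = remainder / len(uses)  # no usage data: fall back to an even split
        shares[title] = floor + bonus
    return shares


# Hypothetical numbers: a 10,000 pool, a 3,000 floor, two titles.
shares = allocate(10_000, 3_000, {"heavily_used": 9, "lightly_used": 1})
print(shares)
```

With these made-up inputs the heavily used title collects 6,600 and the lightly used one 3,400. Without a usage log the only defensible split is the even one, which is the flat fee again.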

󠇟󠇠󠇡󠇢󠆌󠆦󠅎︌󠆵󠄌󠆢󠇛󠇡󠅫󠇃󠄑︇󠆣󠆵󠇟󠅴󠇣󠅮󠇓󠇐󠄾󠄵󠆤󠆾︌󠄦󠅦󠇧󠆙󠄠󠄆󠅜︌󠄊󠄒󠅇󠄹󠅧󠅐The Regulatory Tailwind

The European Commission's second draft Code of Practice under EU AI Act Article 50, published this month, specifies a two-layered marking approach for AI-generated content involving secured metadata and watermarking. 󠇟󠇠󠇡󠇢󠄷󠆙󠅼󠅿󠆜󠅘󠄑󠆃󠆭󠆿󠆪︉󠇔󠇡󠆝󠄊󠅻󠅪󠄙󠇏󠅘󠆮󠅌󠅤󠅌󠄩󠇥󠇜󠆼󠅎󠄥󠆯󠅡󠄓󠆼󠆫󠄴󠇆󠅥󠆗The feedback period closes March 30, 2026. 󠇟󠇠󠇡󠇢󠅴󠅁󠇞󠇜󠄸󠇏󠆠󠅰󠇅󠆖󠄞󠄚󠅒󠇯󠄬󠇆󠄌󠆇󠅧󠄱︀󠇠󠅕︉󠆟󠄬󠄷󠄖󠆃󠄒󠆦󠄛󠄒󠆲󠇬󠅦󠄑󠄦󠅭󠅿The final code is expected in June 2026. 󠇟󠇠󠇡󠇢󠅄󠆥󠇦󠇦󠅮󠆒󠇗󠇮󠄺󠅨󠆪󠄡󠇛󠄋󠄣︄󠇀󠅐󠅬󠆖󠆕󠆎󠄀󠄳󠅡󠅚󠅗󠄫󠅁󠄄󠇋󠇈󠅫󠅄󠆊󠆖󠅕󠄵󠆽󠆗Article 50 obligations become enforceable on August 2, 2026.

󠇟󠇠󠇡󠇢󠄞󠄵󠄡󠅊󠆑󠆇󠇋󠅘󠅕󠇑󠇛󠇀󠇆󠇤󠄩󠅡󠇗󠆛󠆜︂󠆁󠆥󠄿󠄤󠆲󠆥󠄐󠆂󠅥󠇢󠆞󠅗︎󠇕󠆭󠇏󠄴󠇋󠄁󠅑Machine-readable provenance signals are becoming mandatory on the output side. 󠇟󠇠󠇡󠇢󠅾󠄎󠄬󠄪󠄷󠅻󠅣󠆡󠄆󠅘󠄣󠄡󠅎󠆑󠄺󠅐󠇉󠆊󠆰󠅹󠆫󠄷󠄾󠄤󠆌󠇃󠄾󠄔󠅑󠇞󠄡󠅦󠇡︉󠅧󠅒︀󠅖󠆛󠅠AI companies will be required to mark their generated content with metadata identifying it as AI-produced. 󠇟󠇠󠇡󠇢󠆇󠇦󠄷󠄧󠄕󠅨󠇇󠇞󠆧󠆟󠇋󠄇󠇠󠅴󠅍󠅲󠆟󠇩󠆭󠄩󠆶󠅛󠄁󠄱󠄥󠆘󠄮󠅥󠅮󠅨󠅣󠄝󠆋󠆡︂󠆀󠅪󠆑󠄱󠅹The parallel infrastructure on the input side - machine-readable signals embedded in source content that declare authorship and licensing terms - remains voluntary. 󠇟󠇠󠇡󠇢󠄘󠆦󠅦󠅡󠆨󠇀󠆎󠆿󠅂󠄨󠅝󠆰󠇮󠄏󠅍󠅝󠄾󠅏󠇇󠅊︀️󠅝󠄂󠅇󠅕󠆦󠅡󠄴󠄄󠄠󠆤󠅝󠄋󠆙︉󠆂󠄾󠇗󠆆Publishers who build that infrastructure now will be aligned with the regulatory framework as it extends from output marking to input attribution. 󠇟󠇠󠇡󠇢󠆤︄󠆋󠆲󠆻󠇦󠄕󠅹︌󠇊󠅐󠇭󠆷󠆂󠅇󠅬󠆒󠆀󠆡󠆔󠅧󠅯󠆡󠇧󠅏󠇇󠅅󠇤󠇜󠄬󠅮︇󠇏󠄉󠄊󠆝󠅳󠇮󠅾󠄖The EU's TDM opt-out provisions under the Digital Single Market Directive already reward publishers who can demonstrate machine-readable rights reservations. 󠇟󠇠󠇡󠇢󠄗󠇘󠅵󠇄󠇜󠄘󠄉󠇚󠄏󠆋󠇈󠆯󠅵󠅖󠄪󠇐󠄢󠇜󠄨󠄇󠆨󠄝󠄚󠆌󠅍󠅀󠄖󠄓󠆉󠅊󠅟󠅈󠄉󠇐󠇎󠅘󠅠󠄮󠅨󠄔C2PA manifests provide exactly that signal.

󠇟󠇠󠇡󠇢󠆊󠇄󠄀󠆐󠆔󠇦󠅘󠅡󠆍󠇡󠇎󠆔󠄣󠅬󠇕󠆠󠅃󠇪󠄰󠅵󠆚󠇪󠄏󠅬󠄰󠄭︍󠄺󠇧󠅢󠇪󠆵󠄁󠇘󠄉󠄓󠆣󠄁󠇩󠇮󠇟󠇠󠇡󠇢󠅾󠇤󠄖󠄤󠄒︍︈󠆬󠆖󠄇󠅚󠅣󠇢󠅌󠄕󠆯󠆙󠅗󠄁󠇟󠇅󠄘󠇞󠇬󠅖󠇛󠄎︇󠆜󠆊󠄫󠇈󠅡󠄑󠅔󠆁󠅲󠄔󠆵󠄧What This Means for the Next Settlement

The Anthropic settlement goes before the court for final approval in April 2026. The next major AI copyright dispute - and there will be one - will negotiate against this precedent. If the per-work unit persists, a publisher whose content was used in training a model that generates $10 billion in annual revenue will still collect a flat fee per title that bears no relationship to the revenue their content helped produce.

󠇟󠇠󠇡󠇢󠇫󠆦󠅣󠄬󠄪󠇭󠄎󠄜󠅬󠇑󠇒󠇉︈󠅏󠆎󠄒󠄭󠅕󠇜󠅭󠄏󠆁󠅈󠆔󠆀󠅫󠄼︁󠆝󠅷󠅽󠄼󠇦󠅫󠆨󠇐󠆔󠆴󠄳󠆪The alternative requires infrastructure. 󠇟󠇠󠇡󠇢󠆝󠇎󠆵󠇩󠆚󠆊󠄪󠄀︎󠆸󠅨󠄺󠆌󠄨︆󠇑󠄜󠅊󠅅󠇅󠄙󠄎󠇋󠆃󠆙󠅂󠅅󠆍󠄫󠅂󠆗󠆇︁󠆜󠆡󠄀󠄶󠄰󠇗󠇜Sentence-level provenance - the capability Encypher has built on top of the C2PA text embedding standard - creates the audit trail that makes usage-based compensation possible. 󠇟󠇠󠇡󠇢󠅉󠅼󠆃󠄐󠇂󠅤󠆺󠆱󠆵️󠆱󠇬󠆉󠇛󠇍󠆷︅󠄐󠆭󠅍󠅂󠇣󠇋󠅀󠇥󠆕󠆹󠆃󠆧󠆃󠇒󠅚󠅌󠇘󠅾󠄯︍󠇐󠇌󠄙It does not guarantee per-sentence royalties - that requires legal frameworks that are still developing. 󠇟󠇠󠇡󠇢󠇕󠄜󠄬󠇅󠆨󠅺︎󠅏󠇜󠅭󠆫󠅷󠅘󠆁󠅾󠅻󠇁󠇮󠄽󠄵󠄧󠄓󠇒󠅓︄󠅎󠇡󠆐󠄐󠄬󠅸󠅛󠅮󠆾󠆃󠄝󠇑︆󠆗󠄊But it is the prerequisite. 󠇟󠇠󠇡󠇢󠆿󠅞󠆌󠇁︀󠅶󠅸󠇊󠅒󠅚󠄡󠄋󠅓︉󠅫󠅳󠇌󠄃󠆯󠅠󠇘󠄣󠇖󠅣󠄋󠄖︄󠄋󠄞󠅆󠄰󠇍︊󠇊󠅩󠅦󠅨󠄷󠇘︉Without the measurement infrastructure, the legal framework has nothing to measure.

󠇟󠇠󠇡󠇢󠆈󠅚︉󠄜󠆁󠆓󠄕󠇖󠇖󠄑󠄶󠄕󠆩󠄃󠇢󠄠󠄅󠄥󠆕︊󠅍󠇢󠄀󠇮󠆽󠇑󠅞󠆫󠅣󠆉󠆮󠇆󠆅󠆺󠄁󠇯󠅎󠇁󠅅󠄤The Anthropic settlement demonstrated that AI companies will pay to resolve publisher claims. 󠇟󠇠󠇡󠇢󠆗󠆏󠆑󠅀︃󠆐󠄬󠅎︆󠄌󠅙󠅁󠇟󠄢󠄬󠆆󠅓󠄗󠇘󠆢󠄮󠇙󠆇︃󠇡󠇊󠆰󠅰󠅓󠆉󠆚󠆻󠅆󠅛󠅷︄󠆂󠇬󠅯󠅏The open question is whether the next settlement will compensate publishers based on what their content is actually worth to the model - or whether it will be another flat check, divided equally among works that contributed unequally. 󠇟󠇠󠇡󠇢󠅯󠅍󠄢󠇪󠆶󠄂󠅌󠄅󠅙󠄉󠅄󠆷󠄜󠇎󠄐󠅜󠄧󠇙󠆏󠇌󠆛󠆝󠆈󠇒󠆽󠆩󠇂󠆰󠇋󠇡󠆚󠇅︆󠅽󠄺󠆋󠆺󠄸󠆛󠅻The answer depends on whether publishers have the provenance data to make the case. 󠇟󠇠󠇡󠇢󠄣󠇔󠅨󠆟󠆇󠅸󠆶󠇙󠆷󠇈󠆕󠄘󠄃󠄷󠅮󠅡󠄋󠅕󠆾󠇗󠅳󠄠󠅟󠇢︃󠆧󠆶󠄸󠆷󠇗󠅻󠇑󠅓󠆽󠄃󠆧󠇓󠅗󠇎󠆐That data does not exist retroactively. 󠇟󠇠󠇡󠇢󠅺󠄧󠅱󠅌󠄿󠆐󠅘󠅨︇︌󠅡󠅳󠇅󠆫󠆱󠄮󠇥︀󠅢󠆗󠆜󠆶󠄃︂︃󠄠󠅤󠇁󠆒󠄮󠆜󠆩󠇄󠄘󠄫󠅉󠆒󠇕󠅓︉It has to be embedded now, before the next training run.

󠇟󠇠󠇡󠇢󠅭󠆄󠄈󠄌󠄰󠅉󠄹󠆧󠄴󠄮󠅑󠄮󠄆󠅏󠄤󠇫󠆦󠄣󠅆󠆓󠅢󠅺󠅺󠅖󠅁󠆼󠄔󠇭󠄘󠄙󠇟󠅕󠄭󠅞󠅋󠇝󠄔︃󠇧󠄧
